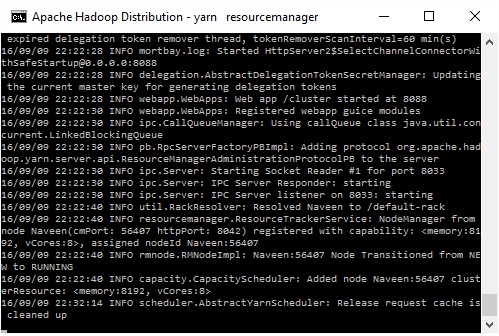

STARTUP_MSG: java = 1.8.0_101
16/09/09 22:22:05 INFO namenode.NameNode: createNameNode
16/09/09 22:22:08 INFO impl.MetricsConfig: loaded properties from hadoop-metrics2.properties
16/09/09 22:22:08 INFO impl.MetricsSystemImpl: Scheduled snapshot period at 10 second(s).
16/09/09 22:22:08 INFO impl.MetricsSystemImpl: NameNode metrics system started
16/09/09 22:22:08 INFO namenode.NameNode: fs.defaultFS is hdfs://127.0.0.1:9000

STARTUP_MSG: java = 1.8.0_101
16/09/09 22:22:13 INFO impl.MetricsConfig: loaded properties from hadoop-metrics2.properties
16/09/09 22:22:13 INFO impl.MetricsSystemImpl: Scheduled snapshot period at 10 second(s).

16/09/09 22:22:13 INFO impl.MetricsSystemImpl: DataNode metrics system started
16/09/09 22:22:13 INFO datanode.BlockScanner: Initialized block scanner with targetBytesPerSec 1048576.
16/09/09 22:22:34 INFO datanode.DataNode: Namenode Block pool BP-531935505-192.168.56.1-288 (Datanode Uuid 964b90aa-8843-4eea-9f50-182e5bab213a) service to /127.0.0.1:9000 trying to claim ACTIVE state with txid=556
16/09/09 22:22:34 INFO datanode.DataNode: Acknowledging ACTIVE Namenode Block pool BP-531935505-192.168.56.1-288 (Datanode Uuid 964b90aa-8843-4eea-9f50-182e5bab213a) service to /127.0.0.1:9000
16/09/09 22:22:34 INFO datanode.DataNode: Successfully sent block report 0x18e88ecf05bac, containing 1 storage report(s), of which we sent 1. The reports had 3 total blocks and used 1 RPC(s). This took 15 msec to generate and 286 msecs for RPC and NN processing. Got back one command: FinalizeCommand/5.
16/09/09 22:22:34 INFO datanode.DataNode: Got finalize command for block pool BP-531935505-192.168.56.1-288.

What is Hadoop? Hadoop is an open-source, Java-based framework for distributed processing on top of distributed storage. It can store any kind of data and process that data using the MapReduce programming model. The framework is designed to tolerate hardware failures, so that no work or data is lost.
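To make the MapReduce programming model a little more concrete, here is a minimal sketch in plain Java. It does not use the Hadoop API at all; the class name `MapReduceSketch` and its methods are hypothetical and exist only for illustration. Each input string stands in for one split of the data: the map step emits a (word, 1) pair per word, and the reduce step groups the pairs by word and sums them.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java illustration of the MapReduce idea; this is NOT the Hadoop API.
class MapReduceSketch {

    // Map phase: emit a (word, 1) pair for every word in one input split.
    static List<Map.Entry<String, Integer>> map(String split) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String word : split.toLowerCase().split("\\s+")) {
            if (!word.isEmpty()) {
                pairs.add(new AbstractMap.SimpleEntry<>(word, 1));
            }
        }
        return pairs;
    }

    // Shuffle and reduce phase: group the pairs by key and sum their values.
    static Map<String, Integer> reduce(List<Map.Entry<String, Integer>> pairs) {
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            counts.merge(pair.getKey(), pair.getValue(), Integer::sum);
        }
        return counts;
    }

    // Run the map step on every split, then reduce all emitted pairs together.
    static Map<String, Integer> wordCount(List<String> splits) {
        List<Map.Entry<String, Integer>> all = new ArrayList<>();
        for (String split : splits) {
            all.addAll(map(split));
        }
        return reduce(all);
    }

    public static void main(String[] args) {
        List<String> splits = Arrays.asList("hadoop stores data", "hadoop processes data");
        System.out.println(wordCount(splits)); // {data=2, hadoop=2, processes=1, stores=1}
    }
}
```

In a real cluster the map calls would run on different nodes, each over the split stored locally, and the framework would handle the grouping between the two phases.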


Hadoop splits the data and distributes it across all the nodes in a cluster so that it can be processed in parallel, with each node working on the data it stores (data locality).

Prerequisites: the latest Java JDK version and hadoop-2.7.3.tar.gz.

Installation steps for Apache Hadoop 2.7.3:

To install Hadoop on your Windows machine, first download and install the latest Java JDK version and set the JAVA_HOME path to your Java installation path; in my case it is “C:\Java8\jdk1.8.0_131”.

Then download Apache Hadoop 2.7.3 from this site. Extract the downloaded file and place it at any location on your machine; on my machine I am placing it in the D: drive.

Navigate to D:\hadoop-2.7.3\hadoop-2.7.3\etc\hadoop. Open the hadoop-env.cmd file in Notepad and change the JAVA_HOME path to your Java installation path, because the Java compiler location is needed to compile and build your applications.

Note: if you are using the default Java home (C:\Program Files\Java\jdk1.8.0_91\bin), you need to give your path in quotes, as “C:\Program Files\Java\jdk1.8.0_91\bin”; if you do not, you will get an error.
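Because a wrong JAVA_HOME is a common stumbling block in this step, a quick check before editing hadoop-env.cmd can save some time. The following is only a sketch: the class `JavaHomeCheck` and its methods are hypothetical helpers, not part of Hadoop, and the rule that a path with spaces needs special attention is taken from the note above rather than from any Hadoop documentation.

```java
import java.io.File;

// Hypothetical helper that inspects a candidate JAVA_HOME value.
class JavaHomeCheck {

    // Returns a short diagnosis for the given JAVA_HOME string.
    static String diagnose(String javaHome) {
        if (javaHome == null || javaHome.trim().isEmpty()) {
            return "JAVA_HOME is not set";
        }
        if (javaHome.contains(" ")) {
            // Assumption from the article's note: paths with spaces need extra care.
            return "JAVA_HOME contains spaces";
        }
        return "JAVA_HOME looks usable";
    }

    // True if a Java compiler executable exists in the bin folder under javaHome.
    static boolean hasCompiler(String javaHome) {
        File bin = new File(javaHome, "bin");
        return new File(bin, "javac.exe").isFile() || new File(bin, "javac").isFile();
    }

    public static void main(String[] args) {
        String javaHome = System.getenv("JAVA_HOME");
        System.out.println(diagnose(javaHome));
        if (javaHome != null) {
            System.out.println("javac found: " + hasCompiler(javaHome));
        }
    }
}
```

Running it with no JAVA_HOME set prints the first diagnosis; with a value set, it also reports whether a compiler was found under that folder.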

Open the core-site.xml file in Notepad, configure the default file system and its access port number, and save the file.
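As a rough sketch of what this step configures, the Java snippet below prints a minimal core-site.xml. It assumes the file only needs the standard `fs.defaultFS` property, and it uses the address that the NameNode log reports (`fs.defaultFS is hdfs://127.0.0.1:9000`). The class name `CoreSiteSketch` and its methods are made up for illustration; adjust the host and port to your own setup.

```java
import java.net.URI;

// Hypothetical helper that renders a minimal core-site.xml as text.
class CoreSiteSketch {

    // Builds a configuration that sets only the default file system.
    static String render(String defaultFs) {
        return String.join("\n",
            "<?xml version=\"1.0\" encoding=\"UTF-8\"?>",
            "<configuration>",
            "  <property>",
            "    <name>fs.defaultFS</name>",
            "    <value>" + defaultFs + "</value>",
            "  </property>",
            "</configuration>");
    }

    // Extracts the access port from the file system address.
    static int port(String defaultFs) {
        return URI.create(defaultFs).getPort();
    }

    public static void main(String[] args) {
        // Address taken from the NameNode log line "fs.defaultFS is hdfs://127.0.0.1:9000".
        String defaultFs = "hdfs://127.0.0.1:9000";
        System.out.println(render(defaultFs));
        System.out.println("NameNode port: " + port(defaultFs));
    }
}
```

The printed XML can be pasted into core-site.xml; the port shown on the last line is the one clients use to reach the NameNode.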

STARTUP_MSG: java = 1.8.0_101
16/09/09 22:22:05 INFO namenode.NameNode: createNameNode
16/09/09 22:22:08 INFO impl.MetricsConfig: loaded properties from hadoop-metrics2.properties
16/09/09 22:22:08 INFO impl.MetricsSystemImpl: Scheduled snapshot period at 10 second(s).
16/09/09 22:22:08 INFO impl.MetricsSystemImpl: NameNode metrics system started
16/09/09 22:22:08 INFO namenode.NameNode: fs.defaultFS is hdfs://127.0.0.1:9000
16/09/09 22:22:08 INFO namenode.NameNode: Clients are to use 127.0.0.1:9000 to access this namenode/service.
16/09/09 22:22:10 INFO hdfs.DFSUtil: Starting Web-server for hdfs at:
16/09/09 22:22:10 INFO mortbay.log: Logging to org.slf4j.impl.Log4jLoggerAdapter(org.mortbay.log) via org.mortbay.log.Slf4jLog
16/09/09 22:22:15 INFO server.AuthenticationFilter: Unable to initialize FileSignerSecretProvider, falling back to use random secrets.


JoshKani79, 27-Sep-17 4:01
I am using Windows 10 64-bit and trying to install 3.0; setting environment variables did not resolve this issue.
C:\hadoop-3.0.0-alpha4\bin>hadoop checknative -a
2017-09-27 19:03:21,206 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform. Using builtin-java classes where applicable
winutils: true C:\hadoop-3.0.0-alpha4\bin\winutils.exe
Native library checking:
hadoop: false
zlib: false
zstd: false
snappy: false
lz4: false
bzip2: false
openssl: false
ISA-L: false
winutils: true C:\hadoop-3.0.0-alpha4\bin\winutils.exe
2017-09-27 19:03:21,486 INFO util.ExitUtil: Exiting with status 1: ExitException.

Member 12487440, 26-Apr-16 19:45
I followed this article for setting up Hadoop. I got the following error.
C:\hadoop-2.3.0\bin>hadoop
The system cannot find the path specified.
Error: JAVA_HOME is incorrectly set.

Daya nidhi, 29-Feb-16 5:23
c:\hadoop-2.3.0\bin>hadoop namenode -format
DEPRECATED: Use of this script to execute hdfs command is deprecated. Instead use the hdfs command for it.
Exception in thread 'main' java.lang.NoClassDefFoundError: V
Caused by: java.lang.ClassNotFoundException: V
at java.net.URLClassLoader$1.run(URLClassLoader.java:202)
at java.security.AccessController.doPrivileged(Native Method)
at java.net.URLClassLoader.findClass(URLClassLoader.java:190)
at java.lang.ClassLoader.loadClass(ClassLoader.java:306)
at sun.misc.Launcher$AppClassLoader.loadClass(Launcher.java:301)
at java.lang.ClassLoader.loadClass(ClassLoader.java:247)
Could not find the main class: V. Program will exit.

Member 11878438, 1-Aug-15 0:34
I've successfully implemented the Hadoop installation up to point 2, but got stuck at point 3. I'm facing 2 issues here. 1. I have copied the Recipe.java code from the website and created the file, but when compiling it throws the error shown below:
C:\hwork>javac -classpath C:\hadoop-2.3.0\share\hadoop\common\hadoop-common-2.3.0.jar;C:\hadoop-2.3.0\share\hadoop\mapreduce\hadoop-mapreduce-client-core-2.3.0.


Member 11833982, 13-Jul-15 5:02
c:\hadoop-2.3.0\sbin>hadoop jar c:\Hwork\Recipe.jar Recipe /in /out
14/04/12 00:52:02 INFO client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8032
14/04/12 00:52:03 INFO input.FileInputFormat: Total input paths to process: 1
14/04/12 00:52:03 INFO mapreduce.JobSubmitter: number of splits:1
14/04/12 00:52:04 INFO mapreduce.JobSubmitter: Submitting tokens for job: job7690001
14/04/12 00:52:04 INFO impl.YarnClientImpl: Submitted application application7690001
14/04/12 00:52:04 INFO mapreduce.Job: The url to track the job:
14/04/12 00:52:04 INFO mapreduce.Job: Running job: job7690001
After the above line the system does not go any further. It gets stuck. Please help me.


I have just begun studying Apache Spark. The first thing I did was try to install Spark on my machine. I downloaded the pre-built Spark 1.5.2 with Hadoop 2.6. When I ran the Spark shell I got the following errors: java.lang.RuntimeException: java.lang.NullPointerException at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:522) at org.apache.spark.sql.hive.client.ClientWrapper.